danah boyd

[ny, ny]

danah has been a huge influence.. see below.

her book, it’s complicated, comes out feb 25, 2014:

book links to amazon

book site:

[note: available on site linked above – free pdf download]

_______________________

highlights/notes:

By and large, the kids are all right. But they want to be understood.

_______________________

notes/resources on it’s complicated via hastac:

________________________

http://www.crisistextline.org/

_______________________

danah on tour:

question/answer at 20 min – huge.. danah – i’m more worried about the ones that are not privileged being misunderstood than those privileged – not getting in… ness. [story of uni asking danah why kid would lie to them about not being in gangs… when his online presence showed otherwise.. her response – what makes you think he’s lying to you.. it’s survival…]

the danger of just one story ness..

31 min – question on “fixing” inequality – danah – tech/online is exposing what we have created offline… don’t think tech is our first effort at fixing… we have done stranger danger to our kids offline.. and that mindset can be seen differently online.. so tech will be helpful.. but need to address underlying – already in existence – issues.. ie: kids online represent existing politics..(paraphrasing all this)

35 min – question on inability to focus w/tech.. danah – talking about our set up of compulsory ed.. is focusing on one task actually in our best interest..? our best skill? – is it ideal? it depends on what we value.. and.. on being able to focus.. it’s almost like adults are far worse at this than teenagers..

38 min – spot on – on timeliness/sleep… we blame kids for getting online late at night – rather than questioning our practice of sending kids to school at an ungodly hour (ie: after 15 hrs of doing the right thing at school at all.. they crave down time online – connecting with friends)

***40 min – talks about her experience where she was told to go find data that fits our beliefs…

47 min – snapchat – you pay attention because you know it’s going to disappear

48 min – the idea of everyone moving to one service (whatsapp/facebook) – really questionable..

50 min – question – a lot has changed in tech – but nothing has really changed in human behavior – danah – how important human contact is… we have focused so heavily on structuring.. that we miss learning… and learning is embedded in connections.. learning to relate to others, have empathy is hard.. how do we as a society create the environment for youth to encounter all kinds of interactions.. the need to allow exploration.. i don’t think tech can drive this.. if we want them to be engaged…responsible/giving/caring… we need to invite them into the public world long before they run off to college

what about tech grounding the chaos for the being in the public world – via daily curiosity – rather than in school…

_______________________

danah’s new research institute 2014:

In under six weeks, our amazing team produced six guiding documents and crafted a phenomenal event called The Social, Cultural & Ethical Dimensions of “Big Data.” On our conference page, you can find an event summary, videos of the sessions, copies of the workshop primers and discussion notes, a zip file of important references, and documents that list participants, the schedule, and production team.

Navigating the messiness of “big data” requires going beyond common frames of public vs. private, collection vs. usage.

_____________________

danah at sxsw 2014:

..this is why I struggle with these tools; they mirror and magnify the good, bad, and ugly. We use this visibility to panic rather than using it to figure out new ways of helping young people.

_______________________

Peter Gray reviews it’s complicated:

_________________________

nov 2014 on net neutrality et al:

https://medium.com/message/net-neutrality-is-sooo-much-more-than-access-to-the-tubes-2344b1e9f220

_________________________

her sites: danah.org and apophenia via zephoria and wikipedia

just meeting up with these people via plenary panel of dmlconference 2013.

http://dmlcentral.net/blog/s-craig-watkins/dml-conference-2013-democratic-futures

Plenary Session #1: “Remixing Citizenship, Remaking Democracy” w/ Craig Watkins, danah boyd, Astrid Silva, Biko Baker, Cathy Cohen

______________

research from 2009:

http://www.danah.org/papers/2009/WhiteFlightDraft3.pdf

______________

danah is amazing.. if you want to find/follow someone seeking truth/authenticity.

perhaps start here:

or here:

______

i write about her influence on us here. she (and macarthurs work in general) have been a huge inspiration.. the idea to go where people are living/learning.. rather than fix/study them in assigned/designated spaces.

way back when i started gathering info on web use here – but my first impression was with danah boyd’s work.

danah boyd says that because of dateline – predators are now in more danger online than youth

_________________

One of the most crucial aspects of coming of age is learning how to navigate public life. The teenage years are precisely when people transition from being a child to being an adult. There is no magic serum that teens can drink on their 18th birthday to immediately mature and understand the world around them. Instead, adolescents must be exposed to — and allowed to participate in — public life while surrounded by adults who can help them navigate complex situations with grace. They must learn to be a part of society, and to do so, they must be allowed to participate.

listening (or not) with an agenda.. that’s more attuned with punishment than protection

our deep concern for protection – gets lost in the next shiny thing, we see shiny as a potential for salvation

_________________

[Kitra – need of youth to remove themselves from all things… that essentially feel fake]

________________

The media coverage focuses on how the posts that they are monitoring are public, suggesting that this excuses their actions because “no privacy is violated.” We should all know by now that this is a terrible justification. Just because teens’ content is publicly accessible does not mean that it is intended for universal audiences nor does it mean that the onlooker understands what they see. (Alice Marwick and I discuss youth privacy dynamics in detail in “Social Privacy in Networked Publics”.) But I want to caution against jumping to the opposite conclusion because these cases aren’t as simple as they might seem.

What became clear in this incident – and many others that I tracked – is that there are plenty of youth crying out for help online on a daily basis. Youth who could really benefit from the fact that their material is visible and someone is paying attention. Urban theorist Jane Jacobs used to argue that the safest societies are those where there are “eyes on the street.” What she meant by this was that healthy communities looked out for each other, were attentive to when others were hurting, and were generally present when things went haywire. How do we create eyes on the digital street?

How do we do so in a way that’s not creepy? When is proactive monitoring valuable for making a difference in teens’ lives? How do we make sure that these same tools aren’t abused for more malicious purposes?

What matters is who is doing the looking and for what purposes. When the looking is done by police, the frame is punitive. But when the looking is done by caring, concerned, compassionate people – even authority figures like social workers – the outcome can be quite different. However well-intended, law enforcement’s role is to uphold the law and people perceive their presence as oppressive even when they’re trying to help. And, sadly, when law enforcement is involved, it’s all too likely that someone will find something wrong. And then we end up with the kinds of surveillance that punishes.

If there’s infrastructure put into place for people to look out for youth who are in deep trouble, I’m all for it. But the intention behind the looking matters the most. When you’re looking for kids who are in trouble in order to help them, you look for cries for help that are public. If you’re looking to punish, you’ll misinterpret content, take what’s intended to be private and publicly punish, and otherwise abuse youth in a new way.

The very practice of privacy is all about control in a world in which we fully know that we never have control. Our friends might betray us, our spaces might be surveilled, our expectations might be shattered. But this is why achieving privacy is desirable. People want to be *in* public, but that doesn’t necessarily mean that they want to *be* public. There’s a huge difference between the two. As a result of the destabilization of social spaces, what’s shocking is how frequently teens have shifted from trying to restrict access to content to trying to restrict access to meaning. They get, at a gut level, that they can’t have control over who sees what’s said, but they hope to instead have control over how that information is interpreted.

he was enamored with how people worked and we argued over the need for rigor, the need for formal training.. The whole point of a functioning democracy is to always question the uses and abuses of power in order to prevent tyranny from emerging. it hasn’t been about justice or national security. It’s been about power.. not much is gained from reifying the us vs. them game that got us here. There has to be another way.

..the internet has been my saving grace. Today, I don’t have that luxury. My internet is painfully public... we haven’t enabled safe spaces to grow.

But I genuinely don’t know what it means to construct safe space for geeks in this configuration of the internet. I’m at a loss. This is why I think it needs innovation. And by innovation I mean more than a repurposing of existing technical blocks... it became a performance, not a community. Hell, I barely keep tabs on what some of my favorite people write about because all of the tools for managing the dynamic broke when it became big. And my blogging practice changed a lot as a result. For better and for worse. So I’m not sure that it is a conversation anymore, as much as a performance that serves as an opening for a conversation in some instances.

I’m not saying he’s wrong; I’m saying his story is incomplete and the incompleteness is important. There’s a reason why researchers and organizations like Pew Research are doing the work that they do — they do so to make sure that we don’t forget about the populations that aren’t already in our networks. …. This is precisely why and how the tech industry is complicit in the increasing structural inequality that is plaguing our society.

We live in a commercial society. I don’t like it and I think it’s unhealthy for everyone, but people don’t give youth enough credit. They’re working with the commercial realities because that’s what they’ve got.

I held his hand to show him how to draw, and he broke the crayon in half. I went to open the door and when I came back, he had figured out how to scribble…Learning to draw — on paper and with some sense of meaning — has a lot to do with the context, a context in which I help create, a context that is learned outside of the crayon itself.

But rather than seeing learning as a process and valuing educators as an important part of a healthy society, we keep looking for easy ways out of our current predicament, solutions that don’t involve respecting the hard work that goes into educating our young.

I wish I had a solution to our education woes, but I’ve been stumped time and again, mostly by the politics surrounding any possible intervention. Historically, education was the province of local schools making local decisions.

more conversation .. less surveillance

One of the perennial problems with the statistical and machine learning techniques that underpin “big data” analytics is that they rely on data entered as input. And when the data you input is biased, what you get out is just as biased. These systems learn the biases in our society. And they spit them back out at us.

[..]Our cultural prejudices are deeply embedded into countless datasets, the very datasets that our systems are trained to learn on.[..]We didn’t architect for prejudice, but we didn’t design systems to combat it either.[..]We are moving into a world of prediction. A world where more people are going to be able to make judgments about others based on data. Data analysis that can mark the value of people as worthy workers, parents, borrowers, learners, and citizens. Data analysis that has been underway for decades but is increasingly salient in decision-making across numerous sectors. Data analysis that most people don’t understand.

unless we change that.. ie: less on prediction more on living..

[..]

One of the most obvious issues is that the diversity of people who are building and using these tools to imagine our future is extraordinarily narrow. Statistical and technical literacy isn’t even part of the curriculum in most American schools. In our society where technology jobs are high-paying and technical literacy is needed for citizenry, less than 5% of high schools even offer AP computer science courses. Needless to say, black and brown youth are much less likely to have access let alone opportunities. If people don’t understand what these systems are doing, how do we expect people to challenge them?

what if that’s (school, citizenry as we know/practice it) the wrong approach.. and.. what if it’s perpetuating, ie: all these youth that keep calling crisis center. what if tech can go deeper than what we’re imagining..

Overview:

People have more access to more information than ever before. And those in power have more access to data about people than ever before. The ecosystem of networked information, colloquially referred to as “big data”, introduces a myriad of questions and challenges as the public grapples with privacy, networked sociality, and the politics of algorithms. In this talk, danah will weave together her research on young people’s practices of social media and the practices of “big data” to highlight challenges and opportunities in making sense of found data.

social data – on young people using references (ie: coke) as encoded language… which made the data very dirty..

on carmen – figuring out song mom won’t recognize but friends will

encoding – the notion of hiding in plain sight

on kids knowing that if brand names were in post… friends would see them more.. they were messing w/the algorithm in order to socialize

what are consequences of that messing..

on target story – and how shopping changes for like 10 yrs after first birth… so went backwards.. to vitamins.. et al

not clear what the accuracy is.. what’s clear is that target has spent a ton of time on this

where’s the edge case of accuracy.. and obviously not accuracy… on getting trust..

what are the small/subtler ramifications

where they’re doing the interpretation of the bias of our society… and feeding them back at us

data comes about by: choice/circumstance/coercion – most is by circumstance – they just want to be a part of something social.. so can’t get them excited about privacy..

the coercive end.. ie: incarceration/policing.. spit in the ? ie: collecting genetic material.. then use genetic analysis.. finding relatives.. we’re building out huge data bases of poc… what does it mean that a majority of data bases are being collected by ie: police

coerced also in a lot of social services..

nsf – most rigorous folks.. but same techniques.. promises that are statistical unfactual.. ie: if you look at what personalized learning is promising… ie: gates/zuck… we don’t have proof of anything.. because data collected thus far has been coercive (school)

on much being formalized mechanisms of discrimination

in order for big data to go from hype to something useful.. need to look at social implications…

as we invest in tech’s we need to invest in social considerations of them…

q&a

q: i’m asking my question on behalf of my toddler… ie: smart toys surrounding him.. capturing data… ie: smart barbie… voice recognition… convo.. sent back to store maker and 3rd party… and smart homes… smart cities…. how to better architect privacy by design

a: children are a strange character.. vulnerable/exploratory.. as a result… we’re going to see lots w/older too..

on current children’s privacy.. every single one of the bills allows data to be collected for criminal justice system.. determining your first moment w/judge… we are fueling a mass incarceration epidemic..

companies are not really honest about what they are doing.. are going to have to face the music… i’m more concerned about companies not well known… i’m more concerned about the network info…. ie: address, married, et al… not biggest risk… the worst is being written off opportunities in society.. ie: via your network position.. automated tracking that shape whether you get jobs..

i’m worried what you’ve already made visible.. we get distracted by the worst fears rather than the more systemic ones…

we’re going to blame poc and we’re going to blame elites.. our ed system.. most people are not actually interacting with each other… we’ve reduced people’s networking ability…

military level – privatized.. and college level.. keeping people in silos… so political..

what we imagined we could do with social media… compared to the more i look at it.. the more i’m concerned about what we’ve created

i’m loving russia – rather than trying to manipulate/control you… we’ll put out so much info .. you won’t know what’s real..

how do we get people engaged enough to understand manipulation

the possibility of rapid fire inequality… to be part of a meaningful society.. scares me… the point we can listen and engage others…

i want to put us past that fear and get people to.. (dang couldn’t hear)

energy\ness

let’s do this first: free art-ists.

@TimKarr “This conversation is by no means over. It is only just beginning. My hope is…” — @zephoria points.datasociety.net/facebook-must-… pic.twitter.com/yfykZZ6gUH

First, all systems are biased. There is no such thing as neutrality when it comes to media. That has long been a fiction, one that traditional news media needs and insists on, even as scholars highlight that journalists reveal their biases through everything from small facial twitches to choice of frames and topics of interests. It’s also dangerous to assume that the “solution” is to make sure that “both” sides of an argument are heard equally. This is the source of tremendous conflict around how heated topics like climate change and evolution are covered. It is even more dangerous, however, to think that removing humans and relying more on algorithms and automation will remove this bias.

so .. rather.. let’s design a mech – (ie: hosting life bits)- to help us dance with it.. dissensus ness

Recognizing bias and enabling processes to grapple with it must be part of any curatorial process, algorithmic or otherwise. As we move into the development of algorithmic models to shape editorial decisions and curation, we need to find a sophisticated way of grappling with the biases that shape development, training sets, quality assurance, and error correction, not to mention an explicit act of “human” judgment.

ie: redefine decision making… disengage from consensus..

[..]The key challenge that emerges out of this debate concerns accountability.[..]This conversation is by no means over. It is only just beginning. My hope is that we quickly leave the state of fear and start imagining mechanisms of accountability that we, as a society, can live with. Institutions like Facebook have tremendous power and they can wield that power for good or evil. But for society to function responsibly, there must be checks and balances regardless of the intentions of any one institution or its leader.

Too many folks that I love dearly just want to double down on the approaches they’ve taken and the commitments they’ve made.[..]I am genuinely struggling to figure out how journalists, editors, and news media should respond in an environment in which they are getting gamed. What I do know from 12-steps is that the first step is to admit that you have a problem. And we aren’t there yet. And sadly, that means that good intentions are getting gamed.[..]But, at the end of the day, I’d always end up kicking myself for not imagining a particular use case in my original design and, as a result, *doing a lot more band-aiding than I’d like to admit. T.. vision for all sorts of different future directions that may never come into fruition. That thinking is so key to building anything, whether it be software or a campaign or a policy. And yet, it’s not a muscle that we **train people to develop.

interesting.. the two times i’ve decided to add an accountability page.. from two of my first mentors in all this (George and now danah). getting at the root.. dang.

ℳąhą Bąℓi مها بالي (@Bali_Maha) tweeted at 8:46 AM – 16 Apr 2017 :

Who gets to choose what is acceptable discrimination? Who gets to choose what values+trade-offs are given priority?

https://t.co/y08Xf2UBIi (http://twitter.com/Bali_Maha/status/853620688720195585?s=17)

Every decision matters, including the decision to make data open and the decision to collect *certain types of data and not others.

*this is huge.. ginorm/small huge.. perhaps .. we’re collecting/focusing on.. wrong type of data.. for humanity (rather than science of people) sake.. let’s try ie: self-talk as data

When it comes to questions of choice, what is often not discussed is *how public good and individual desire often conflict.

so let’s change *that.. let’s just go there.. ie: deep enough

A huge part of the underlying problem stems from the *limits of the data that are being used

*exactly.. but it’s because we assume man-mades.. ie: schools, jobs, jails

They don’t know who is not in the system and violating the law; they’re only making decisions based on who is there.

this is fractal to not getting deep enough.. ie: maté basic needs

This is what happens *when we simply focus on the available data and limit our purview to that narrow scope. We think we’re doing good by making data available, but what we’re doing is making available data that will **continue structural divisions. Is that our goal?

*spot on.. but go deep er.. otherwise.. **perpetuating our broken feedback loop

The problem with contemporary data analytics is that we’re often categorizing people without providing human readable descriptors.

no.. problem is.. that we’re categorizing people.. we need to stop measuring/validating/comparing.. for (blank)’s sake

Norms and standards of today will seem quaint tomorrow. *We need to prepare for that

*by letting go of accountability ness

I am excited about the possibility and future of using data to make responsible decisions. But today hype dominates public rhetoric about the use of data. We shouldn’t be doing data work just to do data work. We have a responsibility to try to combat inequities and prejudice—with our eyes open to the assumptions and limitations of our work, *and accountability as a goal.

oy.. accountability.. not a goal.. if we’re seeking equity: everyone getting a go everyday

I love danah’s replies in this interview. The tear in our social fabric can’t be healed by technology. It’ll be done by us re-creating those social interdependencies that make up society, that engender trust.

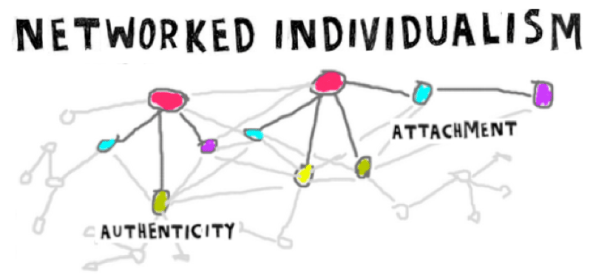

“It’s actually really clear: How do you reknit society? Society is produced by the social connections that are knit together. The stronger those networks, the stronger the society. We have to make a concerted effort to create social ties, social relationships, social networks in the classic sense that allow for strategic bridges across the polis so that people can see themselves as one. “

https://www.wired.com/story/fake-news-social-media-danah-boyd/

Because we can’t quit the products, we become desperate for the companies to save us from ourselves.

We’re not seeing something that is brand new. We’re just distraught because hatred, prejudice, and polarization are now extraordinarily visible

What would it mean to actually understand and seek to remedy the divisions? But I don’t know that that can be done in a financialized way. Actually, I know it can’t be done in a financialized way. I want regulators to work toward rebuilding the networks of America. Not regulate toward fixing an ad.

tech as it could be

danah boyd (@zephoria) tweeted at 9:03 AM – 11 Jan 2018 :

Introducing algo systems into govt decision-making isn’t inherently evil; in practice these tools often magnify inequities while seeking to redress them. Yesterday, I asked you to buy “Automating Inequality” (https://t.co/rhil9Flxa7) Here’s more on why:

https://t.co/PvELrgF19V https://t.co/W0au6l4CDv (http://twitter.com/zephoria/status/951484845670182913?s=17)

I don’t know how Eubanks chose her title, but one of the subtle things about her choice is that she’s (unintentionally?) offering a fantastic backronym for AI. Rather than thinking of AI as “artificial intelligence,” Eubanks effectively builds the case for how we should think that AI often means “automating inequality” in practice.

Lyn Hilt (@lynhilt) tweeted at 7:01 AM – 27 Jan 2018 :

“Panicked about Kids’ Addiction to Tech?” by @zephoria #edchat #edtechchat https://t.co/s0PPYl8hDc (http://twitter.com/lynhilt/status/957252234223538176?s=17)

So, here’s what I recommend to parents of small people: Verbalize what you’re doing with your phone.

Missed @zephoria’s keynote at #SXSWEDU? Read or watch the talk here: https://t.co/HclpqIdQUM

Original Tweet: https://twitter.com/datasociety/status/972189275507769344

forgiveness is a beautiful thing but hypocrisy is de stabilizing

2 min – if we ask students to challenge their sacred cows.. if we don’t give them a new framework in which to make sense of the world.. others are often there to do it for us

6 min – what version of media literacy should we be working towards

7 min – why do we value precision in language.. i sat down w gillian tett

8 min – what she (gillian) realized was that people become elite in this country by mastering the language marked as elite..

9 min – linguistic and communication skills are not universally valued

we are in a culture war.. everyone believes they are part of the resistance..

10 min – so what is this culture war? cory doctorow: .. in the end.. you either have faith that their experts are being truthful.. or you have faith that we are..

who gets to decide what constitutes fact.. in our minds.. we do

11 min – in many native communities.. experience trumps science..

12 min – you cannot resolve fundamental epistemological differences thru compromise

if we’re not careful.. media literacy and critical thinking.. will simply be deployed in the classroom as an assertion of authority over epistemology

right now.. all dissolved into .. one truth.. now students are told only one way of knowing.. one accepted world view

13 min – it took me a long time to question my own teachers

16 min – we equate free speech today with the right to be amplified

free speech ness

18 min – we need to realize in us.. media and ed are viewed as enemy.. two institutions that are trying to have power over how people think.. two institutions that are believed to be asserting authority over epistemology

20 min – goal.. dismantle the very foundations of elite epistemological structures that are so deeply rooted in facts and evidence..

21 min – spaces have popped up to encourage asking of difficult questions.. and some spaces.. to lead to certain paths of thinking…

most youth aren’t interested in having the wool pulled over their heads.. no matter how calming.. they can’t do it in a lot of f to f environ.. so they turn to online.. of course.. in many of these communities.. the red pill adds up to how ed and media are stacked against them.. to deceive people.. so.. asked to question more.. rid of politically correct chapels..

24 min – ie: 2012 – church shooter.. this terrorist.. insane.. or rational..? but.. he began by trying to interrogate media coverage of a common story he couldn’t understand.. (zimmerman vs trayvon)

25 min – so .. encourage people to be critical of news media.. when already taught not to trust it.. so.. they’ve been looking for flaws.. but when encouraged to be critical.. come away assuming media has been lying.. and.. leads into these (extremist) environs

27 min – youth in extremist environs.. more skilled at making media than my peers..

28 min – this is not to say we shouldn’t try to ed people.. i don’t want a world of sheeple

aren’t those the same..?

but i also don’t want to naively assume that media literacy can solve our cultural war.. teaching someone these skills may backfire.. recognizing that using skills when they don’t trust you.. may use it in a way that you can’t imagine

29 min – it’s one thing to talk about interrogating assumptions.. or a person has an emotional distance from the object of study..

it’s entirely different to deal w issues when the very act of asking questions is what’s being weaponized..

we’re not dealing w historical propaganda distributed thru mass media.. or an exercise in state power.. this is about making sense of an info landscape.. where the very schools that people use to make sense of the world around them have been strategically perverted by people who believe themselves to be resisting themselves from the same powerful actors that often we think we should be able to critique..

32 min – one of main goals.. to pervert publics thinking.. called gaslighting.. leaves people submissive.. (for domestic violence) advice is to not try to argue.. but to get out.. because once doubt is instilled.. self doubt is really hard to overcome.. can’t tell what reality is.. constantly engaged in a seed of doubt.. unlike domestic violence.. there’s no getting out

37 min – so what to do.. i don’t know.. i am lost.. but at the same time.. i know i just came and scared the heck out of you.. so am going to offer some path forward..

38 min – need to figure out how to develop antibodies.. to help people not be deceived..

roots of healing.. within.. gershenfeld sel.. to help people not care to deceive

41 min – being able to hold onto your world view while embracing someone else’s

42 min – we cannot and should not get in the game of asserting authority over epistemology.. we need to make certain *our students are more aware of how social interpretation is socially constructed.. to not assume that the answer is to find the facts.. to fact check.. as a formal idea of research

doesn’t that phrase *our students.. assert authority over epistemology in and of itself..?

back to harney domination law

43 min – we need them to understand how a simple fact can be manipulated for a variety of diff purposes.. also grappling with.. how hard it is to resist even if know you’re being manip’d

44 min – i do believe ed has a critical role to play.. but the path forward is not going to allow us to double down on what constitutes a fact.. or teaching people to assess sources.. rebuilding trusts in institutions … but people don’t trust.. and need to realize.. asking them to trust doesn’t work

45 min – over the years.. i’ve seen young people learning how to hack the attention econ.. they saw it as their social playground.. but for some number of them.. *those who were struggling w who they really were.. their id’s .. trying to make sense of the world around them.. those we would normally think of as the most vulnerable youth.. not because vulnerable in a traditional sense.. but because they are lost themselves.. those are the young people who have figured out how to use this to regain power.. they find that power.. not in disrupting your classroom.. but by disrupting the entire info landscape.. gives them a sense of control.. they are not the majority.. but they are in each and everyone of your classrooms.. we need to start paying attention to them..

*roots of healing.. let’s focus on that

46 min – solution above all else.. will require us to deal w the instability we have in this country.. in every aspect.. until we start grappling w this.. i don’t see a way out ..t

ie: hlb via 2 convos that io dance.. as the day..[aka: not part\ial.. for (blank)’s sake…]

47 min – q&a

i don’t know how to get away from this idea of thinking of everyone thinking of themselves as individuals.. a systemic problem.. it’s going to require a system level solution.. how do we get people to embrace this.. and to protect people as networks..

49 min – how do you stitch together networks.. where you can trust..in a sustained way..

2 convos embedded in gershenfeld sel..

if we don’t learn how to support people in mental illness.. i don’t know how anything else can work about it.. we want to normalize in order to use support structure.. but.. we have no support structures in this country..

50 min – so.. we do need to learn how to talk about mental illness.. but more .. we need to figure out how we can support it... this is the challenge w the structure of our country.. believing everyone should be on their own..

54 min – q: how can we use tech to create live in-person communities..

ie: tech as it could be..

a: the original twitter was to connect people at sxsw.. in person.. hash tags were because people wanted to separate into diff rooms in this building.. the whole point was.. in person.. quickly became untenable.. so things get designed in a particular way of use.. then they twist in diff ways

55 min – fb was about in person.. so i think it’s more that some want to do online and some offline..

56 min – i’d argue that the first path is perfectly clear: let young people have more freedom.. that’s pretty simple

i’d say all of us.. ie: do this first

57 min – looking for communities.. of cross generation.. why do we close these walls off when young people most need them.. don’t go stalk/friend students.. but when they come to you.. why don’t we allow that.. we age segregate.. one place we don’t is in gaming .. think about ways to stop age segregating..

in the city.. as the day.. all of us

________________

danah boyd (@zephoria) tweeted at 1:24 PM on Mon, Mar 12, 2018:

In what contexts should we assert authority over epistemology? What are the (unintended) consequences of doing so? This, above all else, is where I get nervous about the perverted implementations (not the ideals) of media/news literacy. https://t.co/kPJ4ykRMV9 https://t.co/R6acW3KWm9

(https://twitter.com/zephoria/status/973278694893588480?s=03)

So I would argue that we need to start developing a networked response to this networked landscape. And it starts by understanding different ways of constructing knowledge.

2 convos embedded in gershenfeld sel.. a nother way

________________

A Few Responses to Criticism of My SXSW-Edu Keynote on Media Literacy

my goal with this talk wasn’t to talk about root causes; it was to challenge a common solution being put forward based on what I’m already seeing play out

What surprised me the most is how few folks really grappled with my primary argument: if we’re not careful, media literacy and critical thinking will be deployed as an assertion of authority over epistemology.

Another line of what I think are VERY appropriate criticisms concerns my recommendations. To be honest, I’m not sure I even believe in them.

For what it’s worth, when I try to untangle the threads to actually address the so-called “fake news” problem, I always end in two places: 1) dismantle financialized capitalism (which is also the root cause of some of the most challenging dynamics of tech companies); 2) reknit the social fabric of society by strategically connecting people. But neither of those are recommendations for educators. <grin>

1\ short bp

2\ 2 convos.. as it could be

_________________

danah boyd (@zephoria) tweeted at 7:10 AM on Fri, Mar 23, 2018:

It seems that folks are getting their heads around what @alicetiara & I were arguing about networked privacy because of Cambridge Analytica. Individual control over data is a complete fiction when it comes to the social world. Your data will be exposed by your friends.

(https://twitter.com/zephoria/status/977170691362623489?s=03)

_________________

danah boyd (@zephoria) tweeted at 2:39 AM on Wed, May 02, 2018:

Look at this turn out for the opening of re:publica! #rp18 Wowsers! My keynote is in 22 minutes and will be live streamed for those kicking yourself for not being here: https://t.co/pUp8KRqFpJ https://t.co/ha89A7nPIg

(https://twitter.com/zephoria/status/991597995346014208?s=03)

ai had become the new big data

my colleague and i – what strategic silence looks like – if you can’t be strategic then be silent

ryan holiday: create spectacle.. then you can control

data by: choice; coercion

it’s not about the algo.. it’s about the process

part 8 – the new bureaucracy: massive reconfigure of social contract.. today’s algo systems are actually an extension of what we understand bureaucracy to be..t

shift moral responsibility..across complex system.. the making of cultural infra.. the way data is configured in ways of controlling larger system.. mechs of regulation can’t look at singular tech.. but need to look at it w/in larger set of ecosystems

we’ve spent the last 100 yrs obsessing about how to create accountability for procedures around bureaucracy but we haven’t figured out how to do this well w/in tech..t

let’s try this.. gershenfeld sel.. via 2 convos.. as it could be

need to acknowledge the ability to regulate bureaucracy has not worked so well thruout history.. t.. and b is something that has been systematically abused at diff points..ie: (kafka, arendt) 63 eichmann trial.. not because he was smart.. but believed himself to be following orders.. the banality of evil.. we should meditate on this.. how is it that it’s not necessarily their intentions but the structure and configuration that causes the pain.. t

data that never really needed to be collected… and so we have inspectors of inspectors et al.. too much.. aka: B and b

we’ve seen dynamics where b can be mundanely awful to horribly abusive.. i’d say the same.. algo systems are intro’ing that wide range of challenges for us right now

what’s at stake is not just what we can set up legally in terms of a company.. but how we can actually structure the right kinds of norms and cultural structures around it

fear and insecurity are on the rise.. and tech is not the cause.. tech is the amplifier.. it’s mirroring and magnifying the good, bad, & ugly.. what we’re seeing is a new form of vulnerability

Céline Keller (@krustelkram) tweeted at 5:26 AM – 2 May 2018 :

Hacking the attention economy vs. Strategic Silence @zephoria opening keynote #rp18 was a great explanation and summary of what’s important right now.A must, if you missed it, watch out for the recording. #MachineLeaning #Ai #Memes (http://twitter.com/krustelkram/status/991640004085583872?s=17)

_________________

on fourth estate (page has notes/comments)

I wrote an essay for @Medium’s Trust series about “The Messy Fourth Estate.” It’s about my frustration with the current state of journalistic responsibility. Check it out and push back. This is me struggling with a topic out loud. <grin> https://t.co/RPajuHXdKz https://t.co/b4OTyE6E5V

Original Tweet: https://twitter.com/zephoria/status/1009565722882793472

________________

danah boyd (@zephoria) tweeted at 8:06 AM on Tue, Jul 31, 2018:

When youth are depressed, social media makes some feel better and some feel worse. But when they’re struggling, many of them are turning to tech for information/support. (Is info out there high quality?) New survey data by @vjrideout & @SusannahFox: https://t.co/V4dwGWhOcx https://t.co/cuSOWOTNxx

(https://twitter.com/zephoria/status/1024295343570206720?s=03)

‘most of national dialogue around young people & tech has been about health risks rather than health promotion’ @vjrideout @SusannahFox

let’s focus on that.. get to roots of healing via mech to listen to & facil each voice.. everyday ie: 2 convos

‘we need to ask ourselves whether the rest of us are doing our part to help meet their needs’ @vjrideout @SusannahFox

imagine a mech to facil maté basic needs.. for all of us (has to be all of us)

_________________

eff award and climbing the ladder

danah boyd (@zephoria) tweeted at 11:22 AM on Thu, Sep 12, 2019:

Tonight I will be receiving an award from @EFF, where I will grapple with the events and people who helped me get where I am today, alongside the ongoing costs of patriarchy. If you’re in SF, please join me: https://t.co/2YroMAIzer

(https://twitter.com/zephoria/status/1172198783804817408?s=03)

_________________

an exchange early on (2009 ish?)..

from me to danah:

hey danah. thank you for the quick response.

i’ve been involved in a lot of conversations about filtering the web for public school. in my district, on twitter, #edchat, etc.

currently i’m thinking we should have no filter.

i think if we avoid the places kids can access outside of school per cipa – we are not doing all we can for safety.

i think we wait too long for everyone to get on board – to feel comfortable with change – especially in ed. i sense that now with filtering – and it’s the kids that lose.

i need your sound advice. i apologize for cutting to the chase – meaning to you – but i can’t find a reason that makes sense to me to filter.

reasons i’ve been given are:

1. safety – i don’t see filtering as the safest way.

2. money – because of #1 – i don’t think following cipa guidelines is worth the money

head me off at the pass sweet – i’m becoming wreckless to

her brilliant response:

In general, I agree with you, provided that you have school monitors in your computer room. I think that teacher monitors (and parents) being present serve as much more effective filters than technology. Shaming is much more effective than blocking. And if you’re not providing a hurdle for students to circumnavigate, you end up reducing the rebellion effect. But the key is to have present adults who are willing and able to push back at students. I do think that educators can and should help youth safely navigate all of the sites that they go to, learn to look out for signs of problems (like phishing scams) and, in general, be a source of media literacy education. But I know it’s tough and a lot of educators aren’t prepared to do it. So they hope it’ll all go away.

Good luck with recklessly changing ed!! It needs to happen!

danah

me back:

thank you danah. so sweet of you to take the time.

insight that i needed. wasn’t even thinking of using parents as filters.

as i anticipated – your wording is so clear. and with no wreckless spelling.

26 kids and i are working on unboxing ed – logging it all on our site. would you mind if i posted this email exchange there – with our morphing thoughts of web use?

so you don’t have to write back – i’ll assume you’re ok with it if i don’t hear from you soon.

danah:

by all means!

_____________________

i resonate and love the story of capital letters.

to me they are screaming.