neural networks

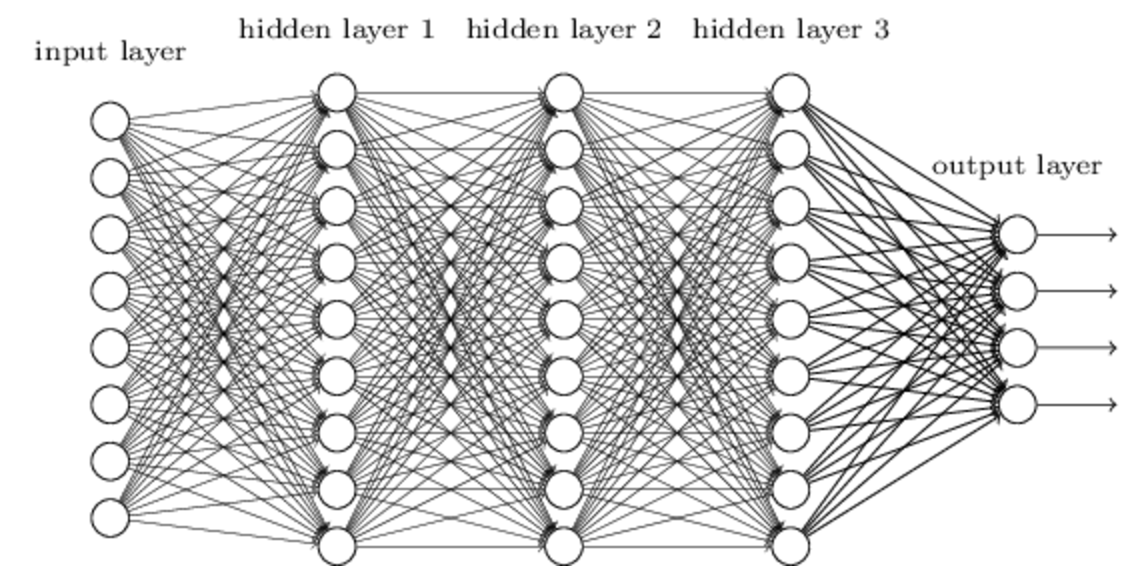

Neural networks (also referred to as connectionist systems) are a computational approach based on a large collection of neural units, loosely modeling the way a biological brain solves problems with large clusters of biological neurons connected by axons. Each neural unit is connected with many others, and links can be excitatory or inhibitory in their effect on the activation state of connected neural units. Each individual neural unit may have a summation function which combines the values of all its inputs. There may be a threshold function or limiting function on each connection and on the unit itself, such that the signal must surpass it before it can propagate to other neurons. These systems are self-learning and trained rather than explicitly programmed, and they excel in areas where the solution or feature detection is difficult to express in a traditional computer program.
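The summation-plus-threshold behaviour described above can be sketched as a single artificial neuron. This is a minimal illustration and not any particular library's model. The weights, the threshold value, and the helper names `weighted_sum` and `fires` are assumptions chosen for the example. Positive weights stand in for excitatory links and a negative weight for an inhibitory one.

```python
from typing import Sequence

def weighted_sum(inputs: Sequence[float], weights: Sequence[float]) -> float:
    """Summation function: combine all inputs, each scaled by its connection weight."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    return sum(x * w for x, w in zip(inputs, weights))

def fires(inputs: Sequence[float], weights: Sequence[float], threshold: float) -> bool:
    """Threshold function: the unit only propagates a signal if the sum exceeds the threshold."""
    return weighted_sum(inputs, weights) > threshold

# Hypothetical unit: two excitatory links (positive weights), one inhibitory link (negative weight).
weights = [0.6, 0.6, -1.0]
threshold = 1.0

print(fires([1, 1, 0], weights, threshold))  # sum is 1.2, above 1.0 -> True
print(fires([1, 1, 1], weights, threshold))  # inhibitory input pulls the sum to about 0.2 -> False
print(fires([1, 0, 0], weights, threshold))  # sum is 0.6 -> False
```

A hard step like this is the simplest possible "threshold function". Trainable networks usually replace it with a smooth function so that the weights can be adjusted by gradients.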

Neural networks typically consist of multiple layers or a cube design, and the signal path traverses from front to back. Backpropagation is where, during training on examples whose correct result is known, the error at the output is sent backward through the network and used to adjust the weights on the earlier (“front”) neural units. More modern networks are more free-flowing in terms of stimulation and inhibition, with connections interacting in a much more chaotic and complex fashion. Dynamic neural networks are the most advanced, in that they can dynamically, based on rules, form new connections and even new neural units while disabling others.
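The "front to back" signal path through multiple layers can be sketched as a forward pass, where each layer's outputs become the next layer's inputs. This is a sketch under stated assumptions. The layer sizes, the weights, and the use of a logistic (sigmoid) activation are illustrative choices not given in the text, and `forward` and `layer_output` are hypothetical helpers rather than a library API.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]            # one row of incoming weights per unit
Layer = Tuple[Matrix, List[float]]    # (weights, biases) for one layer

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

def layer_output(inputs: List[float], layer: Layer) -> List[float]:
    """Each unit sums its weighted inputs, adds its bias, and applies the activation."""
    weights, biases = layer
    return [sigmoid(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]

def forward(inputs: List[float], layers: List[Layer]) -> List[List[float]]:
    """Pass the signal from the front (input) layer to the back (output) layer.

    Every layer's activations are kept, because backpropagation needs them
    when it sends the error back the other way.
    """
    activations = [inputs]
    for layer in layers:
        activations.append(layer_output(activations[-1], layer))
    return activations

# Hypothetical 2-3-1 network: 2 inputs, 3 hidden units, 1 output unit.
network: List[Layer] = [
    ([[0.5, -0.4], [0.3, 0.8], [-0.7, 0.2]], [0.0, 0.1, -0.1]),  # hidden layer
    ([[1.0, -1.5, 0.7]], [0.2]),                                  # output layer
]

acts = forward([1.0, 0.0], network)
print([len(a) for a in acts])  # [2, 3, 1]
print(acts[-1])
```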

The goal of the neural network is to solve problems in the same way that the human brain would, although several neural networks are much more abstract. Modern neural network projects typically work with a few thousand to a few million neural units and millions of connections, which is still several orders of magnitude less complex than the human brain and closer to the computing power of a worm.

New brain research often stimulates new patterns in neural networks. One new approach uses connections that span much further and link processing layers, rather than always being localized to adjacent neurons. Other research explores the different types of signal that axons propagate over time, which are more complex than simply on or off.

Neural networks are based on real numbers, with the value of the core and of the axon typically represented as a number between 0.0 and 1.0.
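One common way to keep a unit's value between 0.0 and 1.0 is the logistic (sigmoid) function, which squashes any real-valued weighted sum into the open interval (0, 1). This is an assumption for illustration; the text does not name a specific function. The sketch below is a minimal version written to avoid overflow for large inputs.

```python
import math

def sigmoid(z: float) -> float:
    """Logistic function: maps any real number into the open interval (0, 1)."""
    # Branch on the sign so math.exp only ever receives a non-positive argument,
    # which avoids OverflowError for inputs with a large magnitude.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

for z in [-10.0, -1.0, 0.0, 1.0, 10.0]:
    print(f"{z:6.1f} -> {sigmoid(z):.6f}")
```

Large negative sums end up close to 0.0 and large positive sums close to 1.0, with 0 mapping to exactly 0.5.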

An interesting facet of these systems is that their success at self-learning is unpredictable: after training, some become great problem solvers while others don’t perform as well. Training them typically takes several thousand cycles of interaction.
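Both points can be illustrated by training the same small network on XOR from several random starting weights: training takes thousands of cycles, and some networks end up better than others. This sketch makes several assumptions that do not come from the text:

- plain stochastic gradient descent with backpropagation,
- a 2-2-1 sigmoid network,
- a squared-error loss,
- a learning rate of 0.5.

Depending on the seed, a run may reach a low error or get stuck in a poor local minimum. `train_xor` is a hypothetical helper.

```python
import math
import random

XOR_DATA = [([0.0, 0.0], 0.0), ([0.0, 1.0], 1.0), ([1.0, 0.0], 1.0), ([1.0, 1.0], 0.0)]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_xor(seed, epochs=4000, lr=0.5, hidden=2):
    """Train a tiny 2-hidden-1 network with backpropagation; return the final mean squared error."""
    rng = random.Random(seed)
    # w1[j] = [weight from input 0, weight from input 1, bias] for hidden unit j.
    w1 = [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(hidden)]
    # w2 = one weight per hidden unit, followed by the output bias.
    w2 = [rng.uniform(-1, 1) for _ in range(hidden + 1)]

    def predict(x):
        h = [sigmoid(w[0] * x[0] + w[1] * x[1] + w[2]) for w in w1]
        y = sigmoid(sum(w2[j] * h[j] for j in range(hidden)) + w2[-1])
        return h, y

    for _ in range(epochs):  # one epoch = one pass over the four examples
        for x, target in XOR_DATA:
            h, y = predict(x)  # forward pass, front to back
            # Gradient of 0.5 * (y - target)**2 with respect to the output unit's input.
            delta_out = (y - target) * y * (1 - y)
            # Send the error back to each hidden unit (using the not-yet-updated w2).
            delta_hidden = [delta_out * w2[j] * h[j] * (1 - h[j]) for j in range(hidden)]
            for j in range(hidden):
                w2[j] -= lr * delta_out * h[j]
            w2[-1] -= lr * delta_out
            for j in range(hidden):
                w1[j][0] -= lr * delta_hidden[j] * x[0]
                w1[j][1] -= lr * delta_hidden[j] * x[1]
                w1[j][2] -= lr * delta_hidden[j]

    return sum((predict(x)[1] - t) ** 2 for x, t in XOR_DATA) / len(XOR_DATA)

for seed in range(4):
    print(f"seed {seed}: final MSE = {train_xor(seed):.4f}")
```

The network, data and training budget are identical in every run; only the random starting weights change. That is enough to make the outcome vary from run to run.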

Like other machine learning methods – systems that learn from data – neural networks have been used to solve a wide variety of tasks, like computer vision and speech recognition, that are hard to solve using ordinary rule-based programming.

Historically, the use of neural network models marked a directional shift in the late eighties from high-level (symbolic) artificial intelligence, characterized by expert systems with knowledge embodied in if-then rules, to low-level (sub-symbolic) machine learning, characterized by knowledge embodied in the parameters of a dynamical system.

[..]

Neural networks and neuroscience

Theoretical and computational neuroscience is the field concerned with the theoretical analysis and the computational modeling of biological neural systems. Since neural systems are intimately related to cognitive processes and behavior, the field is closely related to cognitive and behavioral modeling.

The aim of the field is to create models of biological neural systems in order to understand how biological systems work. To gain this understanding, neuroscientists strive to make a link between observed biological processes (data), biologically plausible mechanisms for neural processing and learning (biological neural network models) and theory (statistical learning theory and information theory).

Types of models

Many models are used in the field, defined at different levels of abstraction and modeling different aspects of neural systems. They range from models of the short-term behavior of individual neurons, through models of how the dynamics of neural circuitry arise from interactions between individual neurons, to models of how behavior can arise from abstract neural modules that represent complete subsystems. These include models of the long-term and short-term plasticity of neural systems and their relation to learning and memory, from the individual neuron to the system level.

Networks with memory

Integrating external memory components with artificial neural networks has a long history, dating back to early research in distributed representations and self-organizing maps. For example, in sparse distributed memory the patterns encoded by neural networks are used as memory addresses for content-addressable memory, with “neurons” essentially serving as address encoders and decoders.
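A toy version of Kanerva's sparse distributed memory shows the idea of using a pattern as an address. Randomly placed "hard locations" act as the address decoders, and every location within a Hamming radius of the address takes part in a write or a read. This is a sketch under assumptions:

- The bit width, number of locations and radius are arbitrary illustrative values.
- `SparseDistributedMemory` is a hypothetical class.
- The neural encoder that would produce the patterns is left out.

```python
import random
from typing import List

Bits = List[int]  # a pattern of 0/1 values

def to_int(bits: Bits) -> int:
    """Pack a 0/1 list into an integer so Hamming distance is an XOR plus a bit count."""
    return sum(bit << i for i, bit in enumerate(bits))

class SparseDistributedMemory:
    """Toy Kanerva-style SDM: random hard locations act as address decoders."""

    def __init__(self, n_bits: int = 256, n_locations: int = 2000, radius: int = 111, seed: int = 0):
        rng = random.Random(seed)
        self.n_bits = n_bits
        self.radius = radius
        # Each hard location has a fixed random address...
        self.addresses = [rng.getrandbits(n_bits) for _ in range(n_locations)]
        # ...and one up/down counter per data bit.
        self.counters = [[0] * n_bits for _ in range(n_locations)]

    def _active(self, address: Bits) -> List[int]:
        """Indices of hard locations within `radius` bits of the given address."""
        a = to_int(address)
        return [i for i, loc in enumerate(self.addresses)
                if bin(a ^ loc).count("1") <= self.radius]

    def write(self, address: Bits, data: Bits) -> None:
        """Every active location adds +1 for each 1-bit and -1 for each 0-bit of the data."""
        for i in self._active(address):
            row = self.counters[i]
            for j, bit in enumerate(data):
                row[j] += 1 if bit else -1

    def read(self, address: Bits) -> Bits:
        """Sum the counters of all active locations and threshold each bit at zero."""
        sums = [0] * self.n_bits
        for i in self._active(address):
            for j, c in enumerate(self.counters[i]):
                sums[j] += c
        return [1 if s > 0 else 0 for s in sums]

# Auto-associative use: store a pattern at its own address, then recall it from a noisy cue.
rng = random.Random(42)
pattern = [rng.randint(0, 1) for _ in range(256)]
memory = SparseDistributedMemory()
memory.write(pattern, pattern)

cue = pattern[:]
for j in rng.sample(range(256), 10):  # flip 10 of the 256 bits
    cue[j] ^= 1

recalled = memory.read(cue)
print(sum(a != b for a, b in zip(recalled, pattern)), "bits differ from the stored pattern")
```

Because the cue is only a few bits from the stored address, many of the locations it activates are the same ones the write touched. That overlap is what lets a partial or noisy pattern act as the key to the full one.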

More recently, deep learning was shown to be useful in semantic hashing, where a deep graphical model of word-count vectors is obtained from a large set of documents. Documents are mapped to memory addresses in such a way that semantically similar documents are located at nearby addresses. Documents similar to a query document can then be found by simply accessing all the addresses that differ by only a few bits from the address of the query document.
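The lookup step of semantic hashing can be sketched without the deep model itself: it means finding documents whose binary address differs from the query's by only a few bits. The 8-bit codes and document names below are made up for illustration. They stand in for the addresses a trained model would produce, and `neighbors_within` and `semantic_lookup` are hypothetical helpers.

```python
from itertools import combinations
from typing import Dict, Iterator, List

def neighbors_within(address: int, n_bits: int, max_flips: int) -> Iterator[int]:
    """Yield every address within `max_flips` bits of `address` (a Hamming ball), itself included."""
    for k in range(max_flips + 1):
        for positions in combinations(range(n_bits), k):
            flipped = address
            for p in positions:
                flipped ^= 1 << p
            yield flipped

def semantic_lookup(table: Dict[int, List[str]], query: int, n_bits: int, max_flips: int) -> List[str]:
    """Collect the documents stored at any address close to the query address."""
    hits: List[str] = []
    for addr in neighbors_within(query, n_bits, max_flips):
        hits.extend(table.get(addr, []))
    return hits

# Hypothetical 8-bit codes; in semantic hashing a trained model would assign these.
table = {
    0b10110010: ["doc_sports_1"],
    0b10110011: ["doc_sports_2"],   # 1 bit away from doc_sports_1
    0b10100011: ["doc_sports_3"],   # 2 bits away from doc_sports_1
    0b01001101: ["doc_finance_1"],  # all 8 bits differ
}

print(semantic_lookup(table, 0b10110010, n_bits=8, max_flips=1))  # ['doc_sports_1', 'doc_sports_2']
```

The number of addresses in the Hamming ball grows quickly with the radius. This kind of lookup therefore only stays cheap when the radius is a few bits, which matches the description above.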

Memory Networks are another extension of neural networks, developed by Facebook AI Research, that incorporate long-term memory. The long-term memory can be read and written to, with the goal of using it for prediction. These models have been applied to question answering (QA), where the long-term memory effectively acts as a (dynamic) knowledge base and the output is a textual response.

Neural Turing Machines, developed by Google DeepMind, extend the capabilities of deep neural networks by coupling them to external memory resources, which they interact with through attentional processes. The combined system is analogous to a Turing machine but is differentiable end-to-end, allowing it to be trained efficiently with gradient descent. Preliminary results demonstrate that Neural Turing Machines can infer simple algorithms such as copying, sorting, and associative recall from input and output examples.
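One of the attentional processes a Neural Turing Machine uses, content-based addressing, can be sketched in a few lines:

1. The controller emits a key vector.
2. Each memory row is scored by cosine similarity to the key.
3. The scores are sharpened by a key strength `beta` and normalized with a softmax.
4. The read vector is the weighted sum of the rows.

This sketch covers only that content-addressing step. The controller network and the interpolation, shift and sharpening steps of the full NTM are left out, and the memory contents and key are made-up values.

```python
import math
from typing import List

Vector = List[float]

def cosine(u: Vector, v: Vector, eps: float = 1e-8) -> float:
    """Cosine similarity; eps guards against division by zero for all-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / (norm + eps)

def content_weights(memory: List[Vector], key: Vector, beta: float) -> List[float]:
    """Soft attention over memory rows: softmax of beta * cosine similarity."""
    scores = [beta * cosine(row, key) for row in memory]
    m = max(scores)  # subtract the max before exponentiating, for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def read(memory: List[Vector], weights: List[float]) -> Vector:
    """Read vector: every row contributes in proportion to its attention weight."""
    width = len(memory[0])
    return [sum(w * row[j] for w, row in zip(weights, memory)) for j in range(width)]

# Hypothetical 3-slot memory with 4-dimensional rows.
memory = [
    [1.0, 0.0, 0.0, 1.0],
    [0.0, 1.0, 1.0, 0.0],
    [1.0, 1.0, 0.0, 0.0],
]
key = [0.9, 0.1, 0.0, 1.1]  # points in nearly the same direction as row 0

w = content_weights(memory, key, beta=10.0)
print([round(x, 3) for x in w])
print([round(x, 3) for x in read(memory, w)])
```

Every row gets a nonzero weight instead of a hard index being picked, so the read is a smooth function of the key and the memory. This is the property that makes the system "differentiable end-to-end".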

Differentiable neural computers (DNC) are an extension of Neural Turing Machines, also from DeepMind. They have outperformed Neural Turing Machines, long short-term memory (LSTM) systems and memory networks on sequence-processing tasks.

_______

benjamin bratton et al

networked individualism et al

________

Sharon Goldwater on translating w/o transcribing via neural networks

New Scientist (@newscientist) tweeted at 5:36 AM – 4 Apr 2017:

Google uses neural networks to translate without transcribing https://t.co/glRhZ1pbIJ https://t.co/2Z76KWNsGV (http://twitter.com/newscientist/status/849224178020626432?s=17)

Machine translation of speech normally works by first converting it into text, then translating that into text in another language. But any error in speech recognition will lead to an error in transcription and a mistake in the translation.
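A rough worked example shows why errors compound in the two-step pipeline. The assumptions here are purely illustrative:

- Speech recognition gets a word right with probability $p_{\text{asr}}$.
- Translation of a correctly transcribed word is right with probability $p_{\text{mt}}$.
- A recognition error always produces a translation error, as the sentence above says.

Under these assumptions a word comes out right only if both stages succeed. The 90% figures are hypothetical, not from the article.

```latex
P(\text{correct translation}) = p_{\text{asr}} \cdot p_{\text{mt}} = 0.9 \times 0.9 = 0.81
```

So two stages that are each 90% accurate leave roughly one word in five wrong overall, which is the loss that skipping the transcription step aims to avoid.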

Researchers at Google Brain, the tech giant’s deep learning research arm, have turned to *neural networks to cut out the middle step. By skipping transcription, the approach could potentially allow for more accurate and quicker translations.

The system could be particularly useful for translating speech in languages that are spoken by very few people, says Sharon Goldwater at the University of Edinburgh in the UK.

*ie: idiosyncratic jargon

need 1st/most: means (nonjudgmental expo labeling) to undo hierarchical listening as global detox so we can org around legit needs.. all the voices.. via tech as it could be

International disaster relief teams, for instance, could use it to quickly put together a translation system to communicate with people they are trying to assist. When an earthquake hit Haiti in 2010, says Goldwater, there was no translation software available for Haitian Creole.

perhaps even self-talk.. so no one needs to train.. or whatever.. to participate in 2 convos.. as the day

Goldwater’s team is using a similar method to translate speech from Arapaho, a language spoken by only 1000 or so people in the Native American tribe of the same name, and Ainu, a language spoken by a handful of people in Japan.

Rare languages

The system could also be used to translate languages that are rarely written down, since it doesn’t require a written version of the source language to produce successful translations.

[..]

And text translation service Google Translate already uses neural networks on its most popular language pairs, which lets it analyse entire sentences at once to figure out the best written translation. Intriguingly, this system appears to use an “interlingua” – a common representation of sentences that have the same meaning in different languages – to translate from one language to another, meaning it could translate between a language pair it hasn’t explicitly been trained on.

The Google Brain researchers suggest the new speech-to-text approach may also be able to produce a system that can translate multiple languages.

like 8 bn.. toward a nother way to live..

But while machine translation keeps improving, it’s difficult to tell how neural networks are coming to their solutions, says Bahdanau. “It’s very hard to understand what’s happening ins

nothing to date has gotten to the root of problem

legit freedom will only happen if it’s all of us.. and in order to be all of us.. has to be sans any form of measuring, accounting, people telling other people what to do

how we gather in a space is huge.. need to try spaces of permission where people have nothing to prove to facil curiosity over decision making.. because the finite set of choices of decision making is unmooring us.. keeping us from us..

ie: imagine if we listen to the itch-in-8b-souls 1st thing everyday & use that data to connect us (tech as it could be.. ai as augmenting interconnectedness)

the thing we’ve not yet tried/seen: the unconditional part of left to own devices ness

[‘in an undisturbed ecosystem ..the individual left to its own devices.. serves the whole’ –dana meadows]

there’s a legit use of tech (nonjudgmental exponential labeling) to facil the seeming chaos of a global detox leap/dance.. for (blank)’s sake..

ie: whatever for a year.. a legit sabbatical ish transition

otherwise we’ll keep perpetuating the same song.. the whac-a-mole-ing ness of sea world.. of not-us ness

___________
